Google 1998: Why a Search Algorithm Required a Global Internet to Exist and to Win, and Why Stanford Was the Only Viable, Non-Interchangeable University Environment for Google's Rise

PageRank is not just a ranking method. It is a system that only becomes real when the network substrate is globally reachable, stable, and fast enough to sustain persistent crawling at scale.

1. The Technical Abstract

Technical Abstract: This record documents the physical and operational requirements that had to exist before a global search engine could exist and win. PageRank is not merely a better ranking method. It is a system that only becomes real when the network substrate is globally reachable, stable, and fast enough to sustain persistent crawling at scale.

This page asserts a specific enabling condition: Stanford’s upstream environment crossed that threshold in 1997 through Digital Island’s private, deterministic global backbone. That substrate removed the legacy latency and timeout failure modes that otherwise would have constrained large-scale, cross-border crawling.

Stanford exclusivity thesis: If Larry Page and Sergey Brin had been building in 1998 on a typical campus upstream (regional, congested, and operationally inconsistent), the project remains regional and non-viable at crawl scale. Stanford was not interchangeable.

Domain context: google.stanford.edu

2. The Stanford PoP

2.1 Contracting and activation (January 1997)

Start of services (record assertion): I was at Stanford in the first week of January 1997 contracting for Stanford data center presence and upstream connectivity. This predates any public announcement.

What “contracting for Stanford presence” means in operational terms:

- Data center presence and space authorization
- Cross-connects and port access
- Local loop and circuit orders
- Backbone-facing ISP port termination with routing controlled by BGP policy under our AS
- Turn-up, acceptance, and operational activation milestones

Exhibit A (internal record, January 1997): Executed contracting and provisioning activity establishing Stanford PoP presence and upstream connectivity. (Use your dated executed documents here, even if redacted.)

2.2 Public confirmation (June 24, 1997 press release)

Exhibit B (public confirmation, not the start): Press release dated June 24, 1997 announcing Stanford University as a customer for Digital Island’s global network services.

How this exhibit is used on this page:

- It corroborates that Stanford was a Digital Island customer in 1997.
- It does not establish the beginning of service. It is a public statement issued after contracting and activation work was already underway.

Important precision: The press release discusses performance gains and availability in tests. It does not state “sub-300ms.” Sub-300ms targets and measurements belong in your internal records, test results, SLAs, and engineering documentation.

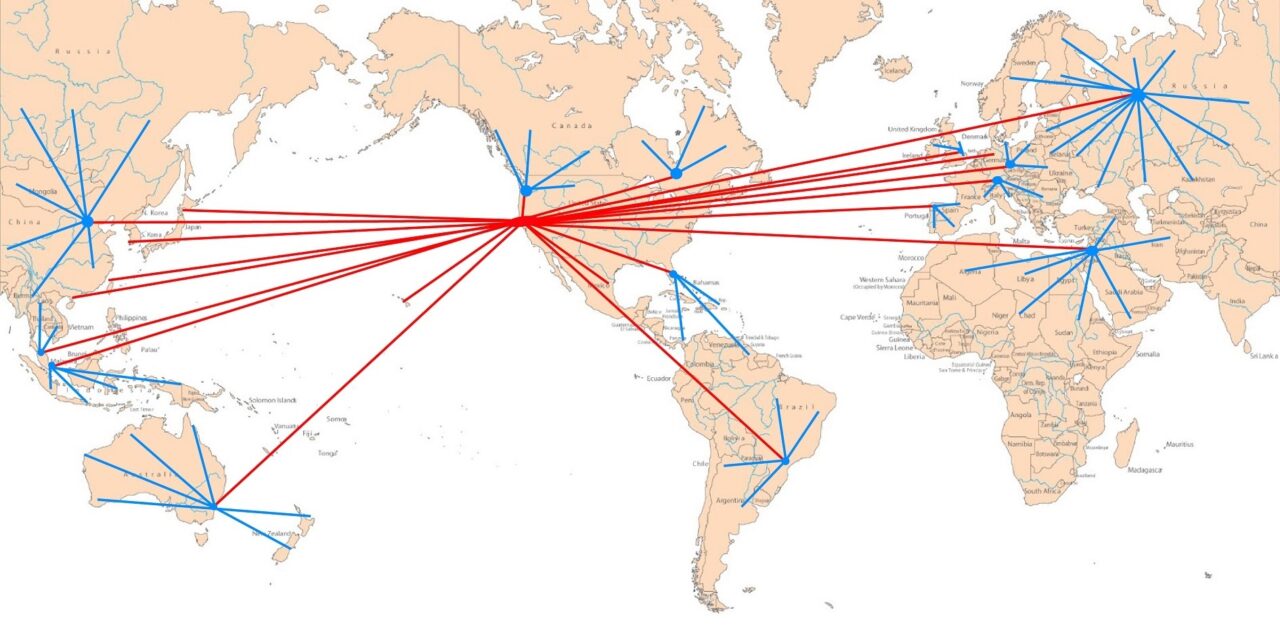

2.3 Figure 1

Figure 1: The Infrastructure Behind the Google Crawl (1997). This schematic identifies the routing path at Stanford that supported the early Google environment. It shows how the Stanford PoP connected into a deterministic global backbone suitable for persistent international sessions and large-scale automation.

3. The “2000ms” Rule (the operational constraint)

The 2000ms rule (defined on this page): A global crawler is a race against timeouts and unstable paths. When effective round-trip latency and loss push session behavior past a practical timeout threshold (modeled here as approximately 2000ms), automated crawling degrades into frequent drops, retries, partial visibility, and regional bias.

Claim: Without deterministic international paths, PageRank cannot converge on a meaningful global link graph because the crawler cannot reliably observe the global web.

What changes when you remove the timeout wall:

- Persistent sessions become routine instead of exceptional
- Crawl coverage becomes global rather than regional
- Link analysis begins to reflect global topology rather than geography and upstream bias
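
The timeout wall can be illustrated with a minimal Python sketch. Only the approximately 2000ms threshold comes from this page. The region names, the round-trip times, and the figure of four round trips per fetch are hypothetical values chosen for illustration, not measurements from the record.

```python
# Minimal sketch of the "2000ms rule" as a coverage model.
# All hosts and round-trip times (RTTs) below are hypothetical.

TIMEOUT_MS = 2000  # practical timeout threshold as modeled on this page


def fetch_succeeds(rtt_ms, round_trips=4, timeout_ms=TIMEOUT_MS):
    """Return True if a fetch finishes before the timeout.

    Assumption: one fetch costs `round_trips` sequential round trips
    (a simplification covering lookup, connection setup, request,
    and response).
    """
    return rtt_ms * round_trips < timeout_ms


def crawl_coverage(hosts, round_trips=4):
    """Fraction of hosts the crawler can fetch without timing out."""
    reachable = [name for name, rtt in hosts.items()
                 if fetch_succeeds(rtt, round_trips)]
    return len(reachable) / len(hosts)


# Hypothetical congested upstream: international paths are slow.
congested = {"us_west": 80, "us_east": 150, "europe": 600, "asia": 900}

# Hypothetical deterministic backbone: every path stays under 300ms.
deterministic = {"us_west": 80, "us_east": 150, "europe": 250, "asia": 290}

print(crawl_coverage(congested))      # 0.5
print(crawl_coverage(deterministic))  # 1.0
```

Under these assumed numbers the congested crawler reaches only the two domestic hosts, while the deterministic path reaches all four. The model ignores packet loss and retries, which would widen the gap further.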

4. Figure 2: The Infrastructure Gap (two eras of web indexing)

Figure 2: The Infrastructure Gap. Two operational eras, separated by whether the network behaves as a globally reachable system.

[Insert Figure 2 here.]

Era A. Regional and fragmented Internet (pre-deterministic backbone conditions)

- Crawlers see partial worlds
- Coverage is biased by geography, peering, and congestion
- Timeouts and drops force shallow indexing and frequent retries
- Ranking reflects network topology artifacts as much as relevance

Era B. Globally operational substrate (deterministic backbone conditions)

- Crawlers can maintain persistent sessions across borders
- Coverage expands toward a unified global corpus
- Latency and reachability stabilize enough to make rankings reproducible
- Link analysis begins to represent global visibility rather than regional artifacts

5. Forensic Closing Statement

Forensic closing: This record asserts that the scalability of PageRank depended on the pre-existence of a globally operational network layer. Stanford’s PoP, contracted and activated in early January 1997, provided the upstream substrate required for global crawling to become operationally repeatable by 1998.

The 1999 S-1 narrative framing of later operational start dates does not negate earlier dated contracting, provisioning, and activation evidence. This page documents the operational prerequisite conditions that had to exist for Google’s 1998 emergence to become a global system.

6. Context and timing

Google did not begin in 1997.

Google began in 1998.

By 1998, Stanford graduate students Page and Brin had developed a new approach to web search, hosted under the domain google.stanford.edu. Their work focused on ranking web pages based on link structure rather than human-curated directories.

That is accurate, but incomplete without the operational context.

Search algorithms do not exist in isolation. They reflect the network environment in which they run.

By 1998, that environment at Stanford had changed.

7. The missing half of the Google origin story

Most histories describe Google as an algorithmic breakthrough. That is true, but insufficient.

A ranking algorithm based on global link structure only works if the Internet behaves like a globally reachable system. Before that condition, link analysis reflects fragmented visibility rather than global relevance.

Before 1997, the Internet was often regionally constrained, latency bound, and operationally inconsistent. Crawlers saw partial worlds. Rankings reflected geography and upstream topology as much as importance.

An algorithm alone cannot fix that.

By the time Google emerged in 1998, Stanford’s upstream environment had transitioned onto a deterministic global backbone. That provided a fundamentally different substrate for experimentation and scaling.

8. Stanford’s network environment in 1998

Timeline anchor:

- Early January 1997: contracting and activation work underway for Stanford PoP presence.
- June 24, 1997: public confirmation in the press release (not service start).

By 1998, this meant systems developed within Stanford’s network could operate with:

- A unified global backbone spanning major international hubs
- Predictable international latency suitable for large-scale crawling
- Bidirectional reachability across a broad share of global Internet users
- Stable routing behavior that reduced regional ISP bias effects

This environment transforms what can be observed, indexed, and ranked.

Google’s early crawlers were not limited to a narrow slice of the web. They could observe the Internet as something closer to a single system.

That difference is decisive.

9. Why Google beat Yahoo

Yahoo’s original discovery model was appropriate for the Internet that existed earlier.

Human-curated directories worked when the web was smaller and when automated discovery yielded inconsistent results across regions and networks.

As the Internet became globally operational, that model collapsed.

Google’s approach succeeded because it matched the new reality of the network. It evaluated relevance based on how the world linked itself together, not how editors categorized it.

That only works when links represent global visibility rather than regional artifacts.

In 1998, for the first time, that condition could be operationally real inside Stanford’s environment.

10. Algorithm plus infrastructure

It is a mistake to credit Google’s success solely to mathematics.

PageRank required three conditions:

- A link-based ranking model
- The ability to crawl a substantial portion of the global web
- A network environment where latency, routing, and reachability are stable enough to make rankings reproducible and meaningful

The first condition was intellectual.

The second and third were infrastructural.

Stanford’s position on a deterministic global backbone made conditions (2) and (3) observable and testable at exactly the moment Page and Brin were building their system.
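
To make the dependence of the first condition on the second and third concrete, here is a minimal sketch of link-based ranking by power iteration. It runs once on a full link graph and once on the subgraph a regionally limited crawler could see. This is a textbook-style PageRank iteration, not Google's actual code. The page names, the link graph, and the helper `visible_subgraph` are hypothetical, and the damping factor of 0.85 is the value commonly cited for PageRank.

```python
# Minimal sketch: the same ranking method gives different answers
# depending on how much of the link graph the crawler can observe.
# The graph and all page names are hypothetical.


def pagerank(links, damping=0.85, iterations=100):
    """Power-iteration PageRank over a dict of page -> outbound links."""
    nodes = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        new_rank = {node: (1.0 - damping) / n for node in nodes}
        for node in nodes:
            targets = links.get(node, [])
            # A page with no visible outlinks spreads its rank evenly.
            receivers = targets if targets else nodes
            share = damping * rank[node] / len(receivers)
            for target in receivers:
                new_rank[target] += share
        rank = new_rank
    return rank


def visible_subgraph(links, reachable):
    """Keep only pages and links a crawler limited to `reachable` can see."""
    return {src: [t for t in targets if t in reachable]
            for src, targets in links.items() if src in reachable}


# Hypothetical global graph: most pages point at a European hub.
full_graph = {
    "us_portal": ["eu_hub"],
    "us_a": ["us_portal", "eu_hub"],
    "us_b": ["us_portal", "eu_hub"],
    "eu_a": ["eu_hub"],
    "eu_b": ["eu_hub"],
    "eu_hub": ["us_portal"],
}

full = pagerank(full_graph)
partial = pagerank(visible_subgraph(full_graph, {"us_portal", "us_a", "us_b"}))

print(max(full, key=full.get))        # eu_hub
print(max(partial, key=partial.get))  # us_portal
```

In this toy graph the globally top-ranked page does not appear at all in the regional crawl, so the regional ranking promotes a different page. The mathematics is identical in both runs; only the observable graph changes.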

11. The Stanford exclusivity factor (the prerequisite)

Stanford was not interchangeable. This record asserts that if Page and Brin had been at a different university in 1998, without Stanford’s upstream positioning on a deterministic, private global backbone, Google does not become Google.

The PageRank concept may still exist on paper. What fails elsewhere is the early advantage that came from:

- global crawl feasibility without constant timeout collapse
- rapid iteration loops supported by stable international paths
- link graph completeness sufficient to validate global relevance ranking

At most other universities in 1997 to 1998, the upstream substrate was regional, congested, and operationally inconsistent. Under those conditions, automated crawling remains regionally constrained and biased. The project remains a research system, not a global utility.

12. Why this was visible to insiders

This was not invisible inside Stanford.

Network engineers, faculty, graduate students, and visiting researchers experienced the difference. Applications scaled differently. Global interactions became routine rather than exceptional.

Anyone working on distributed systems in 1998 could feel the difference between an Internet defined by protocols and an Internet defined by operational reality.

13. Separation of credit

Google did not create the Internet.

Google did not globalize the Internet.

Google exploited the Internet once it became globally operational.

Protocols enable communication. Algorithms enable relevance. Infrastructure enables scale.

Without all three, Google remains a research project.

With all three, it becomes a company that reshaped how the world accesses information.

14. Historical significance

Google’s emergence in 1998 marks an early demonstration of what a truly global Internet made possible.

Not a better webpage.

Not a faster server.

A new class of application whose value depends on worldwide reach and reproducible global behavior.

That class does not exist before the Internet becomes operationally global.

15. Why this page exists

This page does not diminish Google’s achievement.

It contextualizes it.

History improves when invention and operationalization are credited accurately and separately.

Google’s algorithm mattered.

The global Internet made it matter to everyone.

This page forms Evidence Node 3 in the Digital Island Evidence Vault.

Appendix: What each exhibit can prove

Exhibit A (January 1997 executed contracting and activation records): Proves start timing and operational intent. Supports “not interchangeable” because it ties the prerequisite substrate to Stanford before 1998.

Exhibit B (June 24, 1997 press release): Public corroboration that Stanford was a Digital Island customer in 1997. Supports presence and deployment narrative. Does not prove sub-300ms.

Internal latency and SLA/test artifacts (recommended as Exhibit C): Use these to prove “sub-300ms deterministic paths” and persistent session viability at scale. Without this, keep sub-300ms framed as an internal operational target and measurement, not as something the press release states.